* feat(browse): SOCKS5 bridge with auth + cred redaction helper

Adds browse/src/socks-bridge.ts: a 127.0.0.1-only SOCKS5 listener that

accepts unauthenticated connections from Chromium and relays them through

an authenticated upstream proxy. Chromium does not prompt for SOCKS5 auth

at launch, so this bridge is the workaround for using auth-required

residential SOCKS5 upstreams.

- startSocksBridge({ upstream, port: 0 }) → ephemeral 127.0.0.1 listener

- testUpstream({ upstream, retries: 3, backoffMs: 500, budgetMs: 5000 })

pre-flight that connects to a known endpoint (default 1.1.1.1:443)

- Stream-error policy: kill affected client + upstream sockets on any

error mid-stream; no transport retries (a transport-layer retry can

corrupt browser traffic)

Adds browse/src/proxy-redact.ts: single source of truth for redacting

credentials in any logged proxy URL or upstream config. Every code path

that prints proxy config goes through this helper.
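As a rough illustration of the redaction idea (not the actual contents of proxy-redact.ts), a helper along these lines masks the userinfo part of a proxy URL before it is logged. The function body, the `***` mask, and the two URL shapes handled here are assumptions made for the sketch; the commit describes broader coverage, including upstream config objects.

```typescript
// Hypothetical sketch of a redaction helper; not the real proxy-redact.ts.
const MASK = "***";

function redactProxyUrl(raw: string): string {
  // Shape 1: scheme://user:pass@host. Mask everything between "//" and the
  // last "@" that appears before the first "/".
  const withScheme = /^([a-z][a-z0-9+.-]*:\/\/)[^\/\s]*@/i;
  if (withScheme.test(raw)) return raw.replace(withScheme, `$1${MASK}@`);

  // Shape 2: bare user:pass@host with no scheme.
  const bare = /^[^\/\s]*@/;
  if (bare.test(raw)) return raw.replace(bare, `${MASK}@`);

  // No userinfo found: nothing to redact.
  return raw;
}
```

Routing every log line through one function like this keeps the masking rule in a single place, which is the point the commit makes about a single source of truth.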

Adds the socks npm dep (~30KB) and 16 tests covering: 127.0.0.1-only

bind, byte-for-byte round trip through the bridge, auth rejection,

mid-stream upstream drop kills client conn, listener teardown,

testUpstream success + retry-exhaust paths, redaction of every

credential shape.

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

* feat(browse): --proxy and --headed flags wire bridge into daemon

Adds the global --proxy <url> and --headed flags to the browse CLI.

Resolves cred policy and routes the daemon launch through the SOCKS5

bridge (or pass-through for HTTP/HTTPS) before chromium.launch().

CLI (cli.ts):

- extractGlobalFlags() strips --proxy/--headed from argv, parses URL via

Node URL class, validates D9 cred-mixing (env BROWSE_PROXY_USER/PASS

+ URL creds → exit 1 with hint), composes canonical proxy URL with

resolved creds, computes a stable configHash for daemon-mismatch detection

- ensureServer() now reads existing daemon's configHash from state file

and refuses (exit 1 with disconnect hint) if --proxy/--headed mismatch

the existing daemon. No silent restart that would drop tab state.

- All proxy-related stderr lines go through redactProxyUrl

proxy-config.ts (new):

- parseProxyConfig() — URL parser + D9 cred-mixing detector + scheme allowlist

- computeConfigHash() — stable hash of (proxy URL minus creds + headed flag)

- toUpstreamConfig() — map ParsedProxyConfig → socks-bridge.UpstreamConfig
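The "proxy URL minus creds + headed flag" hash can be sketched as follows. This is a minimal version under stated assumptions: SHA-256, the 16-character truncation, and the JSON canonical form are illustrative choices, not details confirmed by the commit.

```typescript
import { createHash } from "node:crypto";

// Hypothetical sketch: hash the proxy URL with credentials stripped, plus the
// headed flag, so rotating credentials does not change the hash.
function computeConfigHash(proxyUrl: string | null, headed: boolean): string {
  let proxyKey = "";
  if (proxyUrl !== null) {
    const url = new URL(proxyUrl);
    url.username = ""; // drop creds before hashing
    url.password = "";
    proxyKey = url.toString();
  }
  const canonical = JSON.stringify({ proxy: proxyKey, headed });
  // Truncation length is an arbitrary choice for this sketch.
  return createHash("sha256").update(canonical).digest("hex").slice(0, 16);
}
```

Because credentials never enter the hash input, a daemon started with one password still matches a later invocation that uses a rotated one, while a different host or a changed headed flag produces a mismatch.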

Server (server.ts):

- Reads BROWSE_PROXY_URL at startup; for SOCKS5+auth, runs testUpstream

pre-flight (5s budget, 3 retries, 500ms backoff) and exits 1 on failure

with redacted error

- Spawns startSocksBridge() on 127.0.0.1:<ephemeral> and points

Chromium at it via socks5://127.0.0.1:<port>

- HTTP/HTTPS or unauth SOCKS5 → pass-through to chromium.launch

proxy.server (with username/password if present)

- State file gains optional configHash for daemon-mismatch check

- Bridge tears down via process.on('exit')

Browser manager (browser-manager.ts):

- New setProxyConfig({ server, username, password }) called by server.ts

before launch

- chromium.launch() and both launchPersistentContext sites pass the

proxy config through when set

Tests: 22 new across proxy-config (parse + cred-mixing + hash stability)

and extractGlobalFlags (flag stripping + cred-mixing rejection + cred

rotation hash stability + redaction).

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

* feat(browse): Xvfb auto-spawn with PID + start-time validation

Adds browse/src/xvfb.ts: a Linux-only Xvfb auto-spawn module for

running headed Chromium in containers without DISPLAY. The module

walks a display range to pick a free one (never hardcodes :99) and

validates orphan PIDs by BOTH /proc/<pid>/cmdline matching 'Xvfb' AND

start-time matching the recorded value before sending any signal.

Defends against PID reuse — refuses to kill anything that doesn't

match both checks.
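A sketch of the both-checks idea, assuming the start time is read from field 22 (starttime) of /proc/<pid>/stat; the commit does not say which source xvfb.ts uses. The function names and the exact cmdline comparison are hypothetical. The functions take the file contents as strings so the logic can be exercised without a live process.

```typescript
// Hypothetical sketch; not the real xvfb.ts.
// Parses starttime (field 22) from the contents of /proc/<pid>/stat.
// The comm field (field 2) may contain spaces or parentheses, so split
// only after the last ")".
function parseStartTime(statLine: string): number | null {
  const close = statLine.lastIndexOf(")");
  if (close === -1) return null;
  const rest = statLine.slice(close + 1).trim().split(/\s+/);
  const raw = rest[19]; // rest[0] is field 3 (state), so field 22 is rest[19]
  if (raw === undefined || !/^\d+$/.test(raw)) return null;
  return Number(raw);
}

// Both checks must pass before any signal is sent.
function isOurXvfbFrom(cmdline: string, statLine: string, recordedStartTime: number): boolean {
  // /proc/<pid>/cmdline is NUL-separated; compare the basename of argv[0].
  const argv0 = cmdline.split("\0")[0] ?? "";
  const looksLikeXvfb = argv0.split("/").pop() === "Xvfb";
  return looksLikeXvfb && parseStartTime(statLine) === recordedStartTime;
}
```

If the PID has been reused by another process, either the cmdline or the start time differs, so the validator returns false and no kill is attempted.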

- shouldSpawnXvfb(env, platform) — pure decision: skip on macOS/Windows,

on Linux skip when DISPLAY or WAYLAND_DISPLAY is set (codex F2)

- pickFreeDisplay(99..120) — probes via xdpyinfo

- spawnXvfb(display) — returns { pid, startTime, display } handle

- isOurXvfb(pid, startTime) — both-checks validator

- cleanupXvfb(state) — best-effort, validates ownership before SIGTERM
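The decision and display-picking functions listed above might look roughly like this. It is a sketch only: the injected `isInUse` probe is a hypothetical stand-in for the xdpyinfo call, and the real shouldSpawnXvfb may consider more inputs than the two described here.

```typescript
// Hypothetical sketch; not the real xvfb.ts.
type Env = Record<string, string | undefined>;

// Pure decision: only spawn on Linux, and only when no display server is
// already advertised through DISPLAY or WAYLAND_DISPLAY.
function shouldSpawnXvfb(env: Env, platform: string): boolean {
  if (platform !== "linux") return false;
  if (env["DISPLAY"] || env["WAYLAND_DISPLAY"]) return false;
  return true;
}

// Walks the display range and returns the first number the probe reports as
// free, or null when the whole range is taken.
function pickFreeDisplay(
  isInUse: (display: number) => boolean,
  from = 99,
  to = 120,
): number | null {
  for (let n = from; n <= to; n++) {
    if (!isInUse(n)) return n;
  }
  return null;
}
```

Keeping the decision pure and the probe injectable is what lets the decision-logic tests run on macOS and Windows without Xvfb installed.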

Wired into server.ts startup: when shouldSpawnXvfb says yes, picks a

free display, spawns Xvfb, sets DISPLAY for chromium.launchHeaded, and

records xvfbPid/xvfbStartTime/xvfbDisplay in the state file. Cleanup

runs on process.on('exit'). The CLI's disconnect path also runs

cleanupXvfb() in the force-cleanup branch when the server is dead.

Disconnect now applies to any non-default daemon (headed mode OR

configHash-tagged daemon — i.e. one started with --proxy/--headed),

not just headed mode.

Adds xvfb + x11-utils to .github/docker/Dockerfile.ci so CI exercises

the Linux container --headed path on every run. Without it the most

common production path would go untested.

Tests: 17 new across decision logic, PID validation defenses

(cmdline mismatch, start-time mismatch), no-op safety on bad inputs,

and a Linux+Xvfb-installed gate for the spawn → validate → cleanup

round trip. Tests skip on macOS/Windows automatically.

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

* feat(browse): webdriver-mask stealth + Chromium-through-bridge e2e

D7 (codex narrowing): mask navigator.webdriver only via addInitScript.

The wintermute approach (fake plugins=[1..5], fake languages=['en-US',

'en'], stub window.chrome) is intentionally NOT applied — modern

fingerprinters check consistency between plugins.length, languages,

userAgent, and platform, so synthesizing fixed values can make the browser

look MORE bot-like, not less. The honest minimum is webdriver, which Chromium

exposes as a known automation tell.
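A minimal sketch of the webdriver-only mask, written against a plain object so it can run outside a browser. The function name is hypothetical and this is not the contents of stealth.ts; in a Playwright setup the same property definition would typically be passed to the context's addInitScript so it runs before page scripts.

```typescript
// Hypothetical sketch; not the real stealth.ts.
// Overrides only `webdriver` and leaves every other navigator property alone,
// so plugins, languages, userAgent and platform stay mutually consistent.
function maskWebdriver(nav: object): void {
  Object.defineProperty(nav, "webdriver", {
    get: () => false,
    configurable: true,
  });
}
```

Nothing else is synthesized, which matches the narrowing described above: remove the automation tell, do not invent browser values.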

Adds browse/src/stealth.ts: single source of truth for the stealth

init script and launch args. Both browser-manager.launch() (headless)

and launchHeaded() (persistent context with extension) call

applyStealth(context) and pass STEALTH_LAUNCH_ARGS into chromium.launch.

The pre-existing launchHeaded stealth that did fake plugins/languages

is removed for the same reason. The cdc_/__webdriver runtime cleanup

and Permissions API patch are kept — they remove automation-injected

artifacts, not synthesize fake natural-browser values.

Adds bridge-chromium-e2e.test.ts (codex F3): the test that proves the

FEATURE works. Real Chromium with proxy.server = 'socks5://127.0.0.1:

<bridgePort>' navigates to a local HTTP fixture; the auth upstream's

connect counter and the HTTP fixture's hit counter both increment,

proving traffic actually traversed bridge → auth-upstream → destination.

Without this test, we could ship a working byte-relay and a broken

Chromium integration and never know.

Adds bridge-port-restart.test.ts (codex F1, reframed): old test

assumed two daemons coexist, which contradicts D2 single-daemon model.

Reframed as restart-then-restart, asserting fresh ephemeral ports

(never the hardcoded 1090) on each spin-up.

Adds stealth-webdriver.test.ts: navigator.webdriver=false in both

fresh contexts and persistent contexts; navigator.plugins/languages

are NOT replaced with the wintermute fake list (D7 verification).

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

* feat(gstack): generate llms.txt — single-file capability index for AI agents

Adds scripts/gen-llms-txt.ts: produces gstack/llms.txt at repo root,

indexing every skill (47), every browse command (75), and design

commands when the design CLI is present. Per the llmstxt.org

convention, agents can read one file to learn what gstack offers

instead of crawling 47 SKILL.md files.

Sources:

- skill SKILL.md.tmpl frontmatter (name + description block scalar)

- browse/src/commands.ts COMMAND_DESCRIPTIONS (sorted by category)

- design/src/commands.ts COMMAND_DESCRIPTIONS if present (best-effort)

Wired into scripts/gen-skill-docs.ts as a post-step so it regenerates

on every `bun run gen:skill-docs` (the same script that re-emits all

SKILL.md files). Failures are non-fatal warnings, not build breaks —

the generator never blocks SKILL.md regen.

Strict mode (--strict, also used by tests) throws when a skill is

missing name or description in its frontmatter, catching missing

metadata before it ships.
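The strict-mode behavior can be pictured with a small parser like the one below. It is a simplified sketch, not the generator itself: the function name, the returned shape, and the null return in non-strict mode are assumptions, and it only handles a `name:` line plus a single-line or block-scalar `description:`.

```typescript
// Hypothetical sketch; not the real scripts/gen-llms-txt.ts.
interface SkillMeta {
  name: string;
  description: string;
}

function parseSkillFrontmatter(source: string, strict = false): SkillMeta | null {
  const match = /^---\n([\s\S]*?)\n---/.exec(source);
  const body = match ? match[1] : "";
  let name = "";
  const desc: string[] = [];
  let inDesc = false;

  for (const line of body.split("\n")) {
    if (inDesc) {
      // Indented lines belong to the description block scalar.
      if (/^\s+\S/.test(line)) { desc.push(line.trim()); continue; }
      if (line.trim() === "") continue;
      inDesc = false; // a non-indented line ends the block
    }
    const kv = /^(\w[\w-]*):\s*(.*)$/.exec(line);
    if (!kv) continue;
    if (kv[1] === "name") name = kv[2].trim();
    if (kv[1] === "description") {
      if (/^[|>][+-]?$/.test(kv[2].trim())) inDesc = true;
      else if (kv[2].trim() !== "") desc.push(kv[2].trim());
    }
  }

  const description = desc.join(" "); // collapse to a single-line summary
  if (name === "" || description === "") {
    if (strict) throw new Error("skill frontmatter is missing name or description");
    return null; // non-strict: caller can warn and skip
  }
  return { name, description };
}
```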

Tests: shape (top-level sections, sort order, single-line summary

discipline), every-skill-and-command-appears, strict-mode rejection of

incomplete frontmatter, and freshness check that the committed

gstack/llms.txt matches what the generator produces now.

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

* feat(browse): --navigate flag on download for browser-triggered files

Adds the --navigate strategy from community PR #1355 (originally from

@garrytan-agents). When set, download navigates to the URL with

waitUntil:'commit' and captures the resulting browser download via

page.waitForEvent('download'), then saves via download.saveAs().

Handles URLs that trigger files via Content-Disposition headers,

multi-hop CDN redirects requiring browser cookies, or anti-bot CDN

chains where page.request.fetch() can't follow the auth/redirect

chain.

Defaults still use the existing direct-fetch strategy. --navigate is

opt-in.

Goes through the same validateNavigationUrl SSRF gate as goto, so

download --navigate cannot reach IPv4 metadata endpoints (AWS IMDSv1,

GCP/Azure equivalents) or arbitrary internal hosts.

Infers content type from the suggested filename for common extensions

(epub, pdf, zip, gz, mp3/mp4, jpg/jpeg/png, txt, html, json) — falls

back to application/octet-stream. Same 200MB cap as Strategy 1.
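The extension-to-type fallback described above could be sketched like this. The function and table names are hypothetical; the extensions are the ones listed in this commit, mapped to their standard MIME types.

```typescript
// Hypothetical sketch of filename-based content-type inference.
const CONTENT_TYPES = new Map<string, string>([
  ["epub", "application/epub+zip"],
  ["pdf", "application/pdf"],
  ["zip", "application/zip"],
  ["gz", "application/gzip"],
  ["mp3", "audio/mpeg"],
  ["mp4", "video/mp4"],
  ["jpg", "image/jpeg"],
  ["jpeg", "image/jpeg"],
  ["png", "image/png"],
  ["txt", "text/plain"],
  ["html", "text/html"],
  ["json", "application/json"],
]);

function inferContentType(suggestedFilename: string): string {
  const dot = suggestedFilename.lastIndexOf(".");
  const ext = dot === -1 ? "" : suggestedFilename.slice(dot + 1).toLowerCase();
  // Unknown or missing extensions fall back to a generic binary type.
  return CONTENT_TYPES.get(ext) ?? "application/octet-stream";
}
```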

Frames the use case generically (anti-bot CDN, Content-Disposition,

redirect chains) rather than naming any specific site, per project

voice rules.

Co-Authored-By: @garrytan-agents

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

* docs: v1.28.0.0 — browse SKILL section + VERSION + CHANGELOG

VERSION 1.27.1.0 → 1.28.0.0 (MINOR — substantial new capability:

five new flags/features, ~600 LOC added, new socks dep, multiple

new modules).

browse/SKILL.md.tmpl: new "Headed Mode + Proxy + Anti-Bot Sites"

section between User Handoff and Snapshot Flags. Documents

--headed (auto-Xvfb on Linux), --proxy (with embedded SOCKS5

bridge for auth), download --navigate, the cred-mixing policy,

daemon-discipline (refuse-on-mismatch), the narrowed

webdriver-only stealth, container support caveats, and the

fail-fast/no-retry failure modes.

CHANGELOG entry follows the release-summary format from CLAUDE.md:

two-line headline, lead paragraph, "The numbers that matter"

table tied to specific test files that prove each capability,

"What this means for AI agents" closing tied to a real workflow

shift, then itemized Added/Changed/Fixed/For-contributors

sections.

Browse SKILL.md regenerated via bun run gen:skill-docs.

gstack/llms.txt regenerated automatically from the same pipeline.

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

* test(browse): integration coverage for daemon mismatch + proxy fail-fast

Adds two integration tests that exercise the full process boundary,

not just the module-level wiring.

daemon-mismatch-refuse.test.ts (D2):

- Stubs a healthy state file with a fake configHash and a fake /health

HTTP server, runs the actual cli.ts binary with a mismatching

--proxy, asserts exit 1 + 'different config' / 'browse disconnect'

hint in stderr.

- Same shape with the plain-daemon-meets---headed case.

- Positive case: matching configHash → CLI does NOT emit the mismatch

hint (regardless of whether the actual command succeeds).

server-proxy-fail-fast.test.ts:

- Starts the rejecting SOCKS5 upstream, spawns server.ts with

BROWSE_PROXY_URL pointing at it, BROWSE_HEADLESS_SKIP=1 to skip

Chromium launch.

- Asserts exit 1, 'FAIL upstream' in stderr (testUpstream pre-flight

ran), no raw credential leakage in any output (redaction works on

the failure path), and exit within 30s upper bound.

Both tests use the existing spawn-bun-cli pattern from

commands.test.ts so they run on the same CI infrastructure as the

rest of the bun test suite.

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

* fix(gen-skill-docs): keep module sync so test require() still works

Two regressions caught by the full test suite after the v1.28.0.0

landing pass:

1) package.json version mismatch — VERSION was bumped to 1.28.0.0

but package.json still pinned to 1.27.1.0.

test/gen-skill-docs.test.ts asserts they match.

2) Top-level await in scripts/gen-llms-txt.ts (CLI entry block) and

scripts/gen-skill-docs.ts (post-step) made gen-skill-docs an

async module. test/gen-skill-docs.test.ts uses require() to pull

extractVoiceTriggers/processVoiceTriggers from gen-skill-docs,

which Bun rejects on async modules with:

"TypeError: require() async module ... unsupported.

use 'await import()' instead."

Fix: wrap the await blocks in void IIFEs so the modules remain sync

from a require() perspective.
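The shape of that fix, as a small stand-alone sketch. `runPostStep`, `postStepStatus`, and `splitTriggers` are hypothetical stand-ins, not names from the scripts; the point is only that the async work is started without a top-level await.

```typescript
// Hypothetical stand-in for the async post-step.
async function runPostStep(): Promise<string> {
  return "done";
}

let postStepStatus = "pending";

// A top-level `await runPostStep()` here would make this an async module.
// Wrapping it in a void async IIFE starts the work but lets module
// evaluation finish synchronously.
void (async () => {
  try {
    postStepStatus = await runPostStep();
  } catch (err) {
    // Non-fatal: warn and keep going.
    console.warn("post-step failed:", err);
    postStepStatus = "failed";
  }
})();

// Synchronous helpers defined in the module are usable as soon as it loads.
function splitTriggers(input: string): string[] {
  return input.split(",").map((s) => s.trim()).filter((s) => s.length > 0);
}
```

The trade-off is that nothing waits for the post-step: errors have to be handled inside the IIFE, and callers cannot assume it has finished by the time the module has loaded.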

After fix: all 379 gen-skill-docs tests pass, all 77 new feature

tests pass (3 skipped on macOS — Linux+Xvfb gates).

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

* fix(browse): apply codex adversarial findings on the new lifecycle

Codex outside-voice review caught five real production-failure modes in

the v1.28.0.0 proxy/headed lifecycle. Fixed:

1) `browse disconnect` skip-graceful for proxy-only daemons

(browse/src/cli.ts). The graceful /command POST went out with stray

`domains,` shorthand and (even fixed) the server's disconnect handler

only tears down headed mode — proxy-only daemons returned 200 "Not

in headed mode" while leaving the bridge running. Now disconnect

short-circuits to force-cleanup for non-headed daemons, which kicks

process.on('exit') in server.ts to close the bridge + Xvfb.

2) sendCommand crash retry preserves --proxy / --headed

(browse/src/cli.ts). The ECONNRESET retry path called startServer()

with no extraEnv, silently dropping the proxied flags. A daemon that

died mid-command would silently restart in default direct/headless

mode and bypass the SOCKS bridge. Now reapplies BROWSE_PROXY_URL,

BROWSE_HEADED, and BROWSE_CONFIG_HASH from the resolved global flags.

3) `connect` honors --proxy (browse/src/cli.ts). The headed-mode

`connect` command built its own serverEnv that didn't include

BROWSE_PROXY_URL, so `browse --proxy <url> connect` launched headed

Chromium without the proxy. Now threads proxyUrl + configHash into

the connect serverEnv.

4) SOCKS5 bridge handles fragmented TCP frames

(browse/src/socks-bridge.ts). Previously used once('data') and

parsed each chunk as a complete SOCKS5 frame — TCP doesn't preserve

message boundaries and split greetings/CONNECT requests caused

intermittent handshake failures. Replaced with a single state

machine that buffers chunks and uses size predicates on the SOCKS5

header to know when a complete frame has arrived. Pauses the client

socket during upstream connect and replays any remainder bytes

into the upstream on success.

5) Xvfb cleanup-then-state-delete ordering

(browse/src/server.ts). emergencyCleanup() previously deleted the

state file BEFORE any Xvfb cleanup could read it, orphaning Xvfb

on uncaughtException / unhandledRejection. Now reads the state

file first, calls cleanupXvfb() (which validates cmdline +

start-time before kill), then deletes the state file.

Adds a regression test for #4: writes the SOCKS5 greeting + CONNECT

one byte at a time with 5ms ticks, asserts a clean round trip after

the fragmented handshake.
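The size-predicate approach from fix #4 can be sketched as below, following the frame layouts in RFC 1928. The class and function names are hypothetical and this is not the code in socks-bridge.ts; it only shows how buffering plus a "how long is this frame" predicate copes with one-byte-at-a-time delivery.

```typescript
// Hypothetical sketch; not the real socks-bridge.ts.
// Each predicate returns the full frame length once the buffer holds a
// complete frame, or null to signal "keep buffering".

// Greeting: VER, NMETHODS, then NMETHODS method bytes.
function greetingLength(buf: Uint8Array): number | null {
  if (buf.length < 2) return null;
  const total = 2 + buf[1];
  return buf.length >= total ? total : null;
}

// Request: VER, CMD, RSV, ATYP, address, 2-byte port.
function connectRequestLength(buf: Uint8Array): number | null {
  if (buf.length < 5) return null; // need ATYP and the first address byte
  let addrLen: number;
  switch (buf[3]) {
    case 0x01: addrLen = 4; break;          // IPv4
    case 0x03: addrLen = 1 + buf[4]; break; // length-prefixed domain name
    case 0x04: addrLen = 16; break;         // IPv6
    default: throw new Error(`unsupported ATYP ${buf[3]}`);
  }
  const total = 4 + addrLen + 2;
  return buf.length >= total ? total : null;
}

class HandshakeBuffer {
  private buf: Uint8Array = new Uint8Array(0);

  push(chunk: Uint8Array): void {
    const next = new Uint8Array(this.buf.length + chunk.length);
    next.set(this.buf, 0);
    next.set(chunk, this.buf.length);
    this.buf = next;
  }

  // Removes and returns one complete frame, or null if it is still partial.
  take(sizeOf: (b: Uint8Array) => number | null): Uint8Array | null {
    const n = sizeOf(this.buf);
    if (n === null) return null;
    const frame = this.buf.slice(0, n);
    this.buf = this.buf.slice(n);
    return frame;
  }

  // Bytes that arrived after the handshake; these get replayed upstream.
  remainder(): Uint8Array {
    return this.buf;
  }
}
```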

Codex's sixth finding (bridge advertises NO_AUTH on 127.0.0.1, so any

co-located process can use the authenticated upstream) is documented

as a known limitation — gstack's threat model assumes single-user

hosts. Adding bridge-side auth is a separate change.

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

* docs: update BROWSER.md + TODOS.md for v1.28.0.0

BROWSER.md picks up a "Headed mode + proxy + browser-native downloads

(v1.28.0.0)" subsection inside Real-browser mode plus the new source-map

entries (socks-bridge.ts, proxy-config.ts, proxy-redact.ts, xvfb.ts,

stealth.ts). TODOS.md anti-bot-stealth item updated to reflect the v1.28

narrowing — the "fake plugins" line is no longer accurate.

Co-Authored-By: Claude Opus 4.7 <noreply@anthropic.com>

* fix(ci): include bun.lock in image build for deterministic install

CI evals all failed on PR #1363 with:

error: Could not resolve: "smart-buffer". Maybe you need to "bun install"?

error: Could not resolve: "ip-address". Maybe you need to "bun install"?

at /opt/node_modules_cache/socks/build/client/socksclient.js:15

The cached node_modules layer in the pre-baked Docker image had

`socks` (the new dep) but was missing its transitive deps (smart-buffer,

ip-address). The image build copied only package.json into the build

context — without bun.lock, `bun install` resolved a different tree

than local `bun install` did, dropping required transitive deps.

Does not reproduce locally: `bun install` yields 229 packages (correct)

whether bun.lock is present or absent. Why CI diverged isn't fully understood — possibly Docker

layer cache reuse across image rebuilds — but the deterministic fix is

to include the lockfile in the image build context and use

`--frozen-lockfile`, matching what every CI doc recommends.

Changes:

- .github/docker/Dockerfile.ci: COPY bun.lock alongside package.json,

switch `bun install` → `bun install --frozen-lockfile` so any future

lockfile drift fails loudly during image build instead of producing

a partially-installed cache that breaks downstream eval jobs.

- .github/workflows/evals.yml: include bun.lock in the image-tag hash

so adding/removing a dep invalidates the image, AND copy bun.lock

into the docker context alongside package.json.

- .github/workflows/evals-periodic.yml: same updates.

- .github/workflows/ci-image.yml: rebuild trigger now fires on bun.lock

changes too; build context includes bun.lock.

Image hash changes → fresh image gets built on next CI run → install

matches the lockfile exactly → no missing transitive deps.

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

* fix(ci): use hardlink copy instead of symlink for node_modules cache

After the bun.lock fix landed, the eval matrix STILL failed identically:

Could not resolve: "smart-buffer" / "ip-address"

at /opt/node_modules_cache/socks/build/client/socksclient.js

But the hash-tagged image actually contains smart-buffer + ip-address +

socks all flat in /opt/node_modules_cache (verified by pulling and

inspecting the image). 207 packages, all present.

Root cause: the workflow used `ln -s /opt/node_modules_cache node_modules`

to restore deps. Bun build (and Node module resolution generally) walks

a file's realpath to find sibling deps. From the symlinked

/workspace/node_modules/socks/build/client/socksclient.js, realpath

resolves to /opt/node_modules_cache/socks/build/client/socksclient.js,

and walking up to find a node_modules/smart-buffer dir fails — there's

no `node_modules` segment in the realpath.
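To make the failure concrete, here is a simplified model of the Node-style lookup: starting from a file's realpath, each ancestor directory contributes a `<dir>/node_modules` candidate. The function name is hypothetical, and Bun's resolver may differ in details; this only mirrors the documented Node algorithm closely enough to show why the symlinked cache breaks.

```typescript
// Hypothetical model of the node_modules lookup path list for a file.
function nodeModulesLookupDirs(fileRealpath: string): string[] {
  const parts = fileRealpath.split("/").slice(0, -1); // drop the filename
  const dirs: string[] = [];
  for (let i = parts.length; i >= 1; i--) {
    // A directory that is itself named node_modules is not extended with
    // another node_modules segment.
    if (parts[i - 1] === "node_modules") continue;
    dirs.push(`${parts.slice(0, i).join("/")}/node_modules`);
  }
  return dirs;
}

// Realpath seen through the symlink: the cache directory never appears as a
// candidate, so siblings such as smart-buffer cannot be found.
const viaSymlink = nodeModulesLookupDirs(
  "/opt/node_modules_cache/socks/build/client/socksclient.js",
);

// Realpath of a real copy under the workspace: /workspace/node_modules is a
// candidate, so sibling packages resolve.
const viaCopy = nodeModulesLookupDirs(
  "/workspace/node_modules/socks/build/client/socksclient.js",
);
```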

Switch `ln -s` → `cp -al` (hardlink-copy). Each file in the cache becomes

a hardlink at /workspace/node_modules/<pkg>, sharing inodes (no data

copy). Realpath of /workspace/node_modules/socks/.../socksclient.js

stays inside /workspace/node_modules, so sibling deps resolve correctly.

Speed is comparable to symlink — `cp -al` on ~200 packages on tmpfs is

sub-second. Same caching story preserved.

Both evals.yml and evals-periodic.yml updated.

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

* fix(ci): cp -r instead of cp -al — /opt and /workspace are different filesystems

The hardlink-copy fix landed and immediately broke with:

cp: cannot create hard link 'node_modules/<file>' to

'/opt/node_modules_cache/<file>': Invalid cross-device link

GitHub Actions runners mount the workspace volume at /workspace

(overlay-fs layered onto the runner image), and /opt is the runner

image's own filesystem. Cross-filesystem hardlinks aren't supported.

Switch `cp -al` → `cp -r`. Cost: ~5s for ~200 packages of small JS

files vs ~0s for the broken symlink. Still cheaper than the ~15s

`bun install` fallback. Realpath of /workspace/node_modules/<pkg>/...

stays inside /workspace, so bun build's sibling-dep resolution works.

Both evals.yml and evals-periodic.yml updated.

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

---------

Co-authored-by: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

gstack

"I don't think I've typed like a line of code probably since December, basically, which is an extremely large change." — Andrej Karpathy, No Priors podcast, March 2026

When I heard Karpathy say this, I wanted to find out how. How does one person ship like a team of twenty? Peter Steinberger built OpenClaw — 247K GitHub stars — essentially solo with AI agents. The revolution is here. A single builder with the right tooling can move faster than a traditional team.

I'm Garry Tan, President & CEO of Y Combinator. I've worked with thousands of startups — Coinbase, Instacart, Rippling — when they were one or two people in a garage. Before YC, I was one of the first eng/PM/designers at Palantir, cofounded Posterous (sold to Twitter), and built Bookface, YC's internal social network.

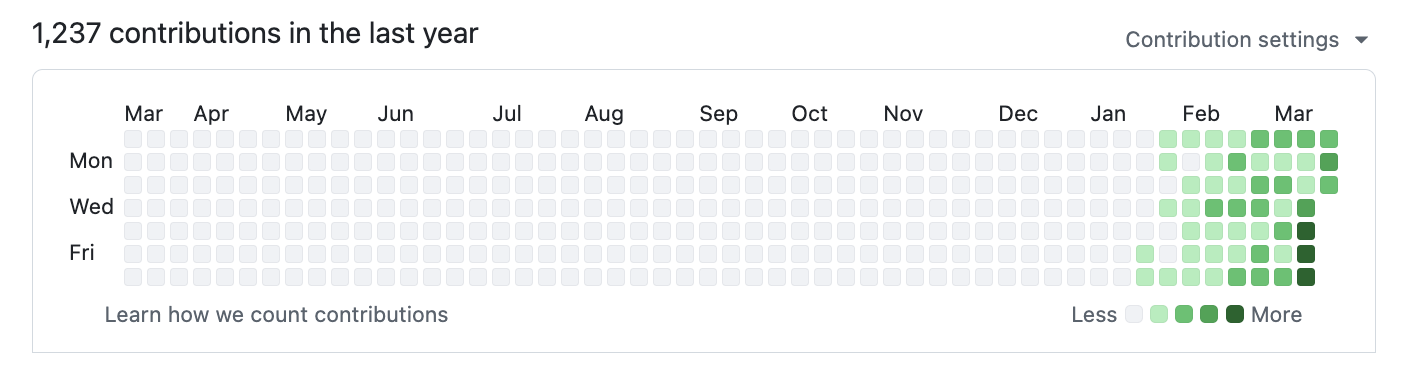

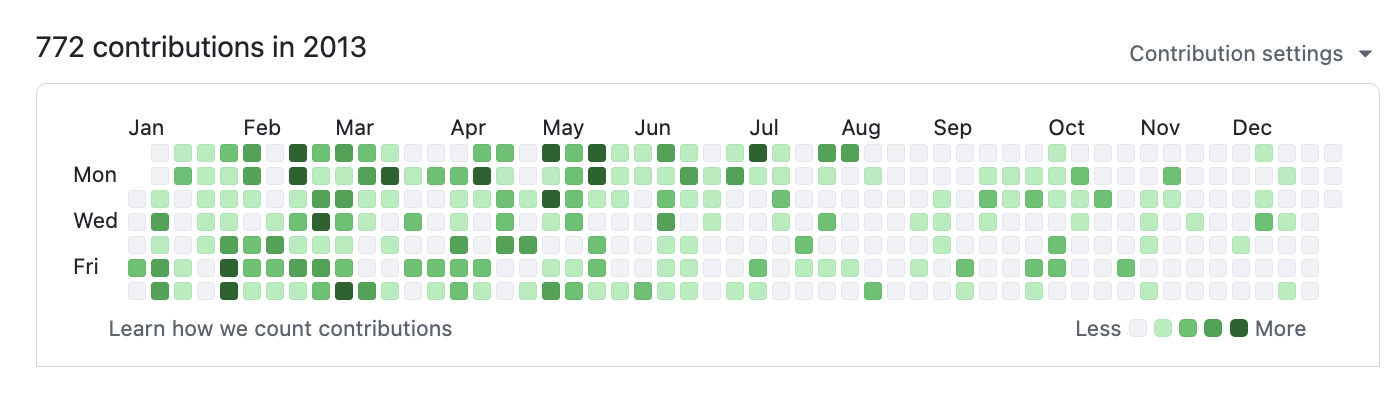

gstack is my answer. I've been building products for twenty years, and right now I'm shipping more products than I ever have. In the last 60 days: 3 production services, 40+ shipped features, part-time, while running YC full-time. On logical code change — not raw LOC, which AI inflates — my 2026 run rate is ~810× my 2013 pace (11,417 vs 14 logical lines/day). Year-to-date (through April 18), 2026 has already produced 240× the entire 2013 year. Measured across 40 public + private garrytan/* repos including Bookface, after excluding one demo repo. AI wrote most of it. The point isn't who typed it, it's what shipped.

The LOC critics aren't wrong that raw line counts inflate with AI. They are wrong that, normalized for inflation, I'm less productive. I'm more productive, by a lot. Full methodology, caveats, and reproduction script: On the LOC Controversy.

2026 — 1,237 contributions and counting:

2013 — when I built Bookface at YC (772 contributions):

Same person. Different era. The difference is the tooling.

gstack is how I do it. It turns Claude Code into a virtual engineering team — a CEO who rethinks the product, an eng manager who locks architecture, a designer who catches AI slop, a reviewer who finds production bugs, a QA lead who opens a real browser, a security officer who runs OWASP + STRIDE audits, and a release engineer who ships the PR. Twenty-three specialists and eight power tools, all slash commands, all Markdown, all free, MIT license.

This is my open source software factory. I use it every day. I'm sharing it because these tools should be available to everyone.

Fork it. Improve it. Make it yours. And if you want to hate on free open source software — you're welcome to, but I'd rather you just try it first.

Who this is for:

- Founders and CEOs — especially technical ones who still want to ship

- First-time Claude Code users — structured roles instead of a blank prompt

- Tech leads and staff engineers — rigorous review, QA, and release automation on every PR

Quick start

- Install gstack (30 seconds — see below)

- Run /office-hours — describe what you're building

- Run /plan-ceo-review on any feature idea

- Run /review on any branch with changes

- Run /qa on your staging URL

- Stop there. You'll know if this is for you.

Install — 30 seconds

Requirements: Claude Code, Git, Bun v1.0+, Node.js (Windows only)

Step 1: Install on your machine

Open Claude Code and paste this. Claude does the rest.

Install gstack: run `git clone --single-branch --depth 1 https://github.com/garrytan/gstack.git ~/.claude/skills/gstack && cd ~/.claude/skills/gstack && ./setup` then add a "gstack" section to CLAUDE.md that says to use the /browse skill from gstack for all web browsing, never use mcp__claude-in-chrome__* tools, and lists the available skills: /office-hours, /plan-ceo-review, /plan-eng-review, /plan-design-review, /design-consultation, /design-shotgun, /design-html, /review, /ship, /land-and-deploy, /canary, /benchmark, /browse, /connect-chrome, /qa, /qa-only, /design-review, /setup-browser-cookies, /setup-deploy, /setup-gbrain, /retro, /investigate, /document-release, /codex, /cso, /autoplan, /plan-devex-review, /devex-review, /careful, /freeze, /guard, /unfreeze, /gstack-upgrade, /learn. Then ask the user if they also want to add gstack to the current project so teammates get it.

Step 2: Team mode — auto-update for shared repos (recommended)

From inside your repo, paste this. Switches you to team mode, bootstraps the repo so teammates get gstack automatically, and commits the change:

(cd ~/.claude/skills/gstack && ./setup --team) && ~/.claude/skills/gstack/bin/gstack-team-init required && git add .claude/ CLAUDE.md && git commit -m "require gstack for AI-assisted work"

No vendored files in your repo, no version drift, no manual upgrades. Every Claude Code session starts with a fast auto-update check (throttled to once/hour, network-failure-safe, completely silent).

Swap required for optional if you'd rather nudge teammates than block them.

OpenClaw

OpenClaw spawns Claude Code sessions via ACP, so every gstack skill just works when Claude Code has gstack installed. Paste this to your OpenClaw agent:

Install gstack: run `git clone --single-branch --depth 1 https://github.com/garrytan/gstack.git ~/.claude/skills/gstack && cd ~/.claude/skills/gstack && ./setup` to install gstack for Claude Code. Then add a "Coding Tasks" section to AGENTS.md that says: when spawning Claude Code sessions for coding work, tell the session to use gstack skills. Include these examples — security audit: "Load gstack. Run /cso", code review: "Load gstack. Run /review", QA test a URL: "Load gstack. Run /qa https://...", build a feature end-to-end: "Load gstack. Run /autoplan, implement the plan, then run /ship", plan before building: "Load gstack. Run /office-hours then /autoplan. Save the plan, don't implement."

After setup, just talk to your OpenClaw agent naturally:

| You say | What happens |
|---|---|
| "Fix the typo in README" | Simple — Claude Code session, no gstack needed |
| "Run a security audit on this repo" | Spawns Claude Code with Run /cso |
| "Build me a notifications feature" | Spawns Claude Code with /autoplan → implement → /ship |
| "Help me plan the v2 API redesign" | Spawns Claude Code with /office-hours → /autoplan, saves plan |

See docs/OPENCLAW.md for advanced dispatch routing and the gstack-lite/gstack-full prompt templates.

Native OpenClaw Skills (via ClawHub)

Four methodology skills that work directly in your OpenClaw agent, no Claude Code session needed. Install from ClawHub:

clawhub install gstack-openclaw-office-hours gstack-openclaw-ceo-review gstack-openclaw-investigate gstack-openclaw-retro

| Skill | What it does |
|---|---|
| `gstack-openclaw-office-hours` | Product interrogation with 6 forcing questions |
| `gstack-openclaw-ceo-review` | Strategic challenge with 4 scope modes |
| `gstack-openclaw-investigate` | Root cause debugging methodology |
| `gstack-openclaw-retro` | Weekly engineering retrospective |

These are conversational skills. Your OpenClaw agent runs them directly via chat.

Other AI Agents

gstack works on 10 AI coding agents, not just Claude. Setup auto-detects which agents you have installed:

git clone --single-branch --depth 1 https://github.com/garrytan/gstack.git ~/gstack

cd ~/gstack && ./setup

Or target a specific agent with ./setup --host <name>:

| Agent | Flag | Skills install to |
|---|---|---|
| OpenAI Codex CLI | `--host codex` | `~/.codex/skills/gstack-*/` |
| OpenCode | `--host opencode` | `~/.config/opencode/skills/gstack-*/` |
| Cursor | `--host cursor` | `~/.cursor/skills/gstack-*/` |
| Factory Droid | `--host factory` | `~/.factory/skills/gstack-*/` |
| Slate | `--host slate` | `~/.slate/skills/gstack-*/` |
| Kiro | `--host kiro` | `~/.kiro/skills/gstack-*/` |
| Hermes | `--host hermes` | `~/.hermes/skills/gstack-*/` |
| GBrain (mod) | `--host gbrain` | `~/.gbrain/skills/gstack-*/` |

Want to add support for another agent? See docs/ADDING_A_HOST.md. It's one TypeScript config file, zero code changes.

See it work

You: I want to build a daily briefing app for my calendar.

You: /office-hours

Claude: [asks about the pain — specific examples, not hypotheticals]

You: Multiple Google calendars, events with stale info, wrong locations.

Prep takes forever and the results aren't good enough...

Claude: I'm going to push back on the framing. You said "daily briefing

app." But what you actually described is a personal chief of

staff AI.

[extracts 5 capabilities you didn't realize you were describing]

[challenges 4 premises — you agree, disagree, or adjust]

[generates 3 implementation approaches with effort estimates]

RECOMMENDATION: Ship the narrowest wedge tomorrow, learn from

real usage. The full vision is a 3-month project — start with

the daily briefing that actually works.

[writes design doc → feeds into downstream skills automatically]

You: /plan-ceo-review

[reads the design doc, challenges scope, runs 10-section review]

You: /plan-eng-review

[ASCII diagrams for data flow, state machines, error paths]

[test matrix, failure modes, security concerns]

You: Approve plan. Exit plan mode.

[writes 2,400 lines across 11 files. ~8 minutes.]

You: /review

[AUTO-FIXED] 2 issues. [ASK] Race condition → you approve fix.

You: /qa https://staging.myapp.com

[opens real browser, clicks through flows, finds and fixes a bug]

You: /ship

Tests: 42 → 51 (+9 new). PR: github.com/you/app/pull/42

You said "daily briefing app." The agent said "you're building a chief of staff AI" — because it listened to your pain, not your feature request. Eight commands, end to end. That is not a copilot. That is a team.

The sprint

gstack is a process, not a collection of tools. The skills run in the order a sprint runs:

Think → Plan → Build → Review → Test → Ship → Reflect

Each skill feeds into the next. /office-hours writes a design doc that /plan-ceo-review reads. /plan-eng-review writes a test plan that /qa picks up. /review catches bugs that /ship verifies are fixed. Nothing falls through the cracks because every step knows what came before it.

| Skill | Your specialist | What they do |
|---|---|---|
| /office-hours | YC Office Hours | Start here. Six forcing questions that reframe your product before you write code. Pushes back on your framing, challenges premises, generates implementation alternatives. Design doc feeds into every downstream skill. |
| /plan-ceo-review | CEO / Founder | Rethink the problem. Find the 10-star product hiding inside the request. Four modes: Expansion, Selective Expansion, Hold Scope, Reduction. |
| /plan-eng-review | Eng Manager | Lock in architecture, data flow, diagrams, edge cases, and tests. Forces hidden assumptions into the open. |
| /plan-design-review | Senior Designer | Rates each design dimension 0-10, explains what a 10 looks like, then edits the plan to get there. AI Slop detection. Interactive: one AskUserQuestion per design choice. |
| /plan-devex-review | Developer Experience Lead | Interactive DX review: explores developer personas, benchmarks against competitors' TTHW, designs your magical moment, traces friction points step by step. Three modes: DX EXPANSION, DX POLISH, DX TRIAGE. 20-45 forcing questions. |
| /design-consultation | Design Partner | Build a complete design system from scratch. Researches the landscape, proposes creative risks, generates realistic product mockups. |
| /review | Staff Engineer | Find the bugs that pass CI but blow up in production. Auto-fixes the obvious ones. Flags completeness gaps. |
| /investigate | Debugger | Systematic root-cause debugging. Iron Law: no fixes without investigation. Traces data flow, tests hypotheses, stops after 3 failed fixes. |
| /design-review | Designer Who Codes | Same audit as /plan-design-review, then fixes what it finds. Atomic commits, before/after screenshots. |
| /devex-review | DX Tester | Live developer experience audit. Actually tests your onboarding: navigates docs, tries the getting started flow, times TTHW, screenshots errors. Compares against /plan-devex-review scores: the boomerang that shows if your plan matched reality. |
| /design-shotgun | Design Explorer | "Show me options." Generates 4-6 AI mockup variants, opens a comparison board in your browser, collects your feedback, and iterates. Taste memory learns what you like. Repeat until you love something, then hand it to /design-html. |
| /design-html | Design Engineer | Turn a mockup into production HTML that actually works. Pretext computed layout: text reflows, heights adjust, layouts are dynamic. 30KB, zero deps. Detects React/Svelte/Vue. Smart API routing per design type (landing page vs dashboard vs form). The output is shippable, not a demo. |
| /qa | QA Lead | Test your app, find bugs, fix them with atomic commits, re-verify. Auto-generates regression tests for every fix. |
| /qa-only | QA Reporter | Same methodology as /qa but report only. Pure bug report without code changes. |
| /pair-agent | Multi-Agent Coordinator | Share your browser with any AI agent. One command, one paste, connected. Works with OpenClaw, Hermes, Codex, Cursor, or anything that can curl. Each agent gets its own tab. Auto-launches headed mode so you watch everything. Auto-starts ngrok tunnel for remote agents. Scoped tokens, tab isolation, rate limiting, activity attribution. |
| /cso | Chief Security Officer | OWASP Top 10 + STRIDE threat model. Zero-noise: 17 false positive exclusions, 8/10+ confidence gate, independent finding verification. Each finding includes a concrete exploit scenario. |
| /ship | Release Engineer | Sync main, run tests, audit coverage, push, open PR. Bootstraps test frameworks if you don't have one. |
| /land-and-deploy | Release Engineer | Merge the PR, wait for CI and deploy, verify production health. One command from "approved" to "verified in production." |
| /canary | SRE | Post-deploy monitoring loop. Watches for console errors, performance regressions, and page failures. |
| /benchmark | Performance Engineer | Baseline page load times, Core Web Vitals, and resource sizes. Compare before/after on every PR. |
| /document-release | Technical Writer | Update all project docs to match what you just shipped. Catches stale READMEs automatically. |
| /retro | Eng Manager | Team-aware weekly retro. Per-person breakdowns, shipping streaks, test health trends, growth opportunities. /retro global runs across all your projects and AI tools (Claude Code, Codex, Gemini). |
| /browse | QA Engineer | Give the agent eyes. Real Chromium browser, real clicks, real screenshots. ~100ms per command. /open-gstack-browser launches GStack Browser with sidebar, anti-bot stealth, and auto model routing. |
| /setup-browser-cookies | Session Manager | Import cookies from your real browser (Chrome, Arc, Brave, Edge) into the headless session. Test authenticated pages. |
| /autoplan | Review Pipeline | One command, fully reviewed plan. Runs CEO → design → eng review automatically with encoded decision principles. Surfaces only taste decisions for your approval. |
| /learn | Memory | Manage what gstack learned across sessions. Review, search, prune, and export project-specific patterns, pitfalls, and preferences. Learnings compound across sessions so gstack gets smarter on your codebase over time. |

Which review should I use?

| Building for... | Plan stage (before code) | Live audit (after shipping) |
|---|---|---|
| End users (UI, web app, mobile) | /plan-design-review | /design-review |
| Developers (API, CLI, SDK, docs) | /plan-devex-review | /devex-review |
| Architecture (data flow, perf, tests) | /plan-eng-review | /review |
| All of the above | /autoplan (runs CEO → design → eng → DX, auto-detects which apply) | n/a |

Power tools

| Skill | What it does |
|---|---|
| /codex | Second Opinion: independent code review from OpenAI Codex CLI. Three modes: review (pass/fail gate), adversarial challenge, and open consultation. Cross-model analysis when both /review and /codex have run. |
| /careful | Safety Guardrails: warns before destructive commands (rm -rf, DROP TABLE, force-push). Say "be careful" to activate. Override any warning. |
| /freeze | Edit Lock: restrict file edits to one directory. Prevents accidental changes outside scope while debugging. |
| /guard | Full Safety: /careful + /freeze in one command. Maximum safety for prod work. |
| /unfreeze | Unlock: remove the /freeze boundary. |
| /open-gstack-browser | GStack Browser: launch GStack Browser with sidebar, anti-bot stealth, auto model routing (Sonnet for actions, Opus for analysis), one-click cookie import, and Claude Code integration. Clean up pages, take smart screenshots, edit CSS, and pass info back to your terminal. |
| /setup-deploy | Deploy Configurator: one-time setup for /land-and-deploy. Detects your platform, production URL, and deploy commands. |
| /setup-gbrain | GBrain Onboarding: from zero to running gbrain in under 5 minutes. PGLite local, Supabase existing URL, or auto-provision a new Supabase project via Management API. MCP registration for Claude Code + per-repo trust triad (read-write/read-only/deny). Full guide. |
| /sync-gbrain | Keep Brain Current: re-index this repo's code into gbrain via gbrain sources add + gbrain sync --strategy code, refresh the ## GBrain Search Guidance block in CLAUDE.md, and auto-remove guidance when the capability check fails. --incremental (default), --full, --dry-run. Idempotent; safe to re-run. |
| /gstack-upgrade | Self-Updater: upgrade gstack to latest. Detects global vs vendored install, syncs both, shows what changed. |

New binaries (v0.19)

Beyond the slash-command skills, gstack ships standalone CLIs for workflows that don't belong inside a session:

| Command | What it does |
|---|---|
| gstack-model-benchmark | Cross-model benchmark: run the same prompt through Claude, GPT (via Codex CLI), and Gemini; compare latency, tokens, cost, and (optionally) LLM-judge quality score. Auth detected per provider, unavailable providers skip cleanly. Output as table, JSON, or markdown. --dry-run validates flags + auth without spending API calls. |
| gstack-taste-update | Design taste learning: writes approvals and rejections from /design-shotgun into a persistent per-project taste profile. Decays 5%/week. Feeds back into future variant generation so the system learns what you actually pick. |
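The 5%/week decay on the taste profile can be pictured with a small sketch. The source only says "Decays 5%/week"; the compounding formula, the TasteSignal shape, and the +1/-1 encoding below are assumptions for illustration, not gstack-taste-update's actual storage format.

```typescript
// Hypothetical model of a decaying taste profile. Only the 5%/week rate
// comes from the README; everything else here is an assumption.
const WEEKLY_DECAY = 0.05;
const MS_PER_WEEK = 7 * 24 * 60 * 60 * 1000;

interface TasteSignal {
  tag: string;        // illustrative label, e.g. "more whitespace"
  weight: number;     // assumed encoding: +1 approval, -1 rejection
  recordedAt: number; // epoch milliseconds
}

// Compounding decay: each elapsed week keeps 95% of the previous weight.
function decayedWeight(signal: TasteSignal, now: number): number {
  const weeks = Math.max(0, (now - signal.recordedAt) / MS_PER_WEEK);
  return signal.weight * Math.pow(1 - WEEKLY_DECAY, weeks);
}

// Sum decayed weights per tag so recent picks outweigh old ones.
function tasteScores(signals: TasteSignal[], now: number): Map<string, number> {
  const scores = new Map<string, number>();
  for (const s of signals) {
    scores.set(s.tag, (scores.get(s.tag) ?? 0) + decayedWeight(s, now));
  }
  return scores;
}
```

Under this model an approval from ten weeks ago still counts for roughly 60% of a fresh one, so old preferences fade rather than vanish.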

Continuous checkpoint mode (opt-in, local by default)

Run gstack-config set checkpoint_mode continuous and skills auto-commit your work as you go with a WIP: prefix plus a structured [gstack-context] body (decisions, remaining work, failed approaches). These commits survive crashes and context switches. /context-restore reads them to reconstruct session state. /ship filter-squashes WIP commits before the PR (preserving non-WIP commits) so bisect stays clean. Push is opt-in via checkpoint_push=true; the default is local-only so you don't trigger CI on every WIP commit.
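As a rough picture of what a checkpoint commit message might contain, here is a minimal sketch. The WIP: prefix, the [gstack-context] marker, and the three kinds of content come from the description above; the exact section titles and layout are assumptions, and formatCheckpointMessage and isWipCommit are hypothetical helper names.

```typescript
// Hypothetical layout for a checkpoint commit message. The real body
// format is not documented here, so treat the section titles as guesses.
interface CheckpointContext {
  decisions: string[];
  remaining: string[];
  failedApproaches: string[];
}

function formatCheckpointMessage(summary: string, ctx: CheckpointContext): string {
  // Render a titled bullet list, or nothing when the list is empty.
  const section = (title: string, items: string[]): string[] =>
    items.length === 0 ? [] : [`${title}:`, ...items.map((item) => `- ${item}`)];

  return [
    `WIP: ${summary}`,
    "",
    "[gstack-context]",
    ...section("Decisions", ctx.decisions),
    ...section("Remaining", ctx.remaining),
    ...section("Failed approaches", ctx.failedApproaches),
  ].join("\n");
}

// A squash step could use a check like this to tell WIP commits from
// regular ones by subject line.
function isWipCommit(subject: string): boolean {
  return subject.startsWith("WIP:");
}
```

Keeping the context in the commit body means the state travels with the repository itself, which is what lets a later session rebuild it from git history alone.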

Domain skills + raw CDP escape hatch

Two new browser primitives compound the gstack agent over time:

- $B domain-skill save: agent saves a per-site note (e.g., "LinkedIn's Apply button lives in an iframe") that fires automatically next time it visits that hostname. Quarantined → active after 3 successful uses → optional cross-project promotion via $B domain-skill promote-to-global. Storage lives alongside /learn's per-project learnings file. Full reference: docs/domain-skills.md.

- $B cdp <Domain.method>: raw Chrome DevTools Protocol escape hatch for the rare case curated commands miss. Deny-default: methods must be explicitly added to browse/src/cdp-allowlist.ts with a one-line justification. Two-tier mutex serializes browser-scoped CDP calls against per-tab work. Output for data-exfil methods is wrapped in the UNTRUSTED envelope.
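The domain-skill lifecycle (quarantined, then active after 3 successful uses, then optionally global) reads naturally as a small state machine. The sketch below is one way to model it; the DomainSkill shape and function names are hypothetical, and requiring the active state before promotion is an assumption the source does not confirm.

```typescript
// Hypothetical state machine for a domain skill. Only the three stages
// and the threshold of 3 successful uses come from the README.
type SkillState = "quarantined" | "active" | "global";

interface DomainSkill {
  hostname: string;
  note: string;
  state: SkillState;
  successfulUses: number;
}

const ACTIVATION_THRESHOLD = 3;

// Count one successful use; a quarantined skill becomes active once it
// reaches the threshold. Other states are left unchanged.
function recordSuccess(skill: DomainSkill): DomainSkill {
  const successfulUses = skill.successfulUses + 1;
  const state: SkillState =
    skill.state === "quarantined" && successfulUses >= ACTIVATION_THRESHOLD
      ? "active"
      : skill.state;
  return { ...skill, successfulUses, state };
}

// Assumption: only skills that have already proven themselves (active)
// may be promoted across projects.
function promoteToGlobal(skill: DomainSkill): DomainSkill {
  if (skill.state !== "active") {
    throw new Error(`cannot promote a ${skill.state} skill`);
  }
  return { ...skill, state: "global" };
}
```

The quarantine stage is what keeps a single wrong note from being trusted immediately: it has to work a few times before it graduates.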

Want raw CDP with no rails, no allowlist, no daemon, just thin transport from agent to Chrome? browser-use/browser-harness-js is a different philosophy (agent-authored helpers vs gstack's curated commands) and a good fit if you don't want gstack's security stack. The two can coexist: gstack's $B cdp and harness can both attach to the same Chrome via Playwright's newCDPSession.
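For contrast with the no-rails approach, a deny-default allowlist check for $B cdp might look like the sketch below. The entries, field names, and checkCdpMethod are illustrative assumptions; the real contents of browse/src/cdp-allowlist.ts are not shown in this document. The CDP method names themselves are real protocol methods.

```typescript
// Hypothetical allowlist shape. Each entry carries the one-line
// justification the README requires, plus a flag for output that should
// be treated as untrusted.
interface AllowlistEntry {
  justification: string;
  wrapAsUntrusted: boolean;
}

const CDP_ALLOWLIST = new Map<string, AllowlistEntry>([
  ["Page.captureScreenshot", { justification: "visual verification", wrapAsUntrusted: false }],
  ["Network.getCookies", { justification: "debug auth state", wrapAsUntrusted: true }],
]);

type CdpDecision =
  | { allowed: true; entry: AllowlistEntry }
  | { allowed: false; reason: string };

// Deny-default: anything not explicitly listed is refused.
function checkCdpMethod(method: string): CdpDecision {
  const entry = CDP_ALLOWLIST.get(method);
  if (entry === undefined) {
    return { allowed: false, reason: `${method} is not on the allowlist` };
  }
  return { allowed: true, entry };
}
```

The point of the design is that forgetting to list a method fails closed: a new or unfamiliar CDP call is blocked until someone writes down why it is needed.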

Deep dives with examples and philosophy for every skill →

Karpathy's four failure modes? Already covered.

Andrej Karpathy's AI coding rules (17K stars) nail four failure modes: wrong assumptions, overcomplexity, orthogonal edits, imperative over declarative. gstack's workflow skills enforce all four. /office-hours forces assumptions into the open before code is written. The Confusion Protocol stops Claude from guessing on architectural decisions. /review catches unnecessary complexity and drive-by edits. /ship transforms tasks into verifiable goals with test-first execution. If you already use Karpathy-style CLAUDE.md rules, gstack is the workflow enforcement layer that makes them stick across entire sprints, not just single prompts.

Parallel sprints

gstack works well with one sprint. It gets interesting with ten running at once.

Design is at the heart. /design-consultation builds your design system from scratch, researches what's out there, proposes creative risks, and writes DESIGN.md. But the real magic is the shotgun-to-HTML pipeline.

/design-shotgun is how you explore. You describe what you want. It generates 4-6 AI mockup variants using GPT Image. Then it opens a comparison board in your browser with all variants side by side. You pick favorites, leave feedback ("more whitespace", "bolder headline", "lose the gradient"), and it generates a new round. Repeat until you love something. Taste memory kicks in after a few rounds so it starts biasing toward what you actually like. No more describing your vision in words and hoping the AI gets it. You see options, pick the good ones, and iterate visually.

/design-html makes it real. Take that approved mockup (from /design-shotgun, a CEO plan, a design review, or just a description) and turn it into production-quality HTML/CSS. Not the kind of AI HTML that looks fine at one viewport width and breaks everywhere else. This uses Pretext for computed text layout: text actually reflows on resize, heights adjust to content, layouts are dynamic. 30KB overhead, zero dependencies. It detects your framework (React, Svelte, Vue) and outputs the right format. Smart API routing picks different Pretext patterns depending on whether it's a landing page, dashboard, form, or card layout. The output is something you'd actually ship, not a demo.

/qa was a massive unlock. It let me go from 6 to 12 parallel workers. Claude Code saying "I SEE THE ISSUE" and then actually fixing it, generating a regression test, and verifying the fix — that changed how I work. The agent has eyes now.

Smart review routing. Just like at a well-run startup: CEO doesn't have to look at infra bug fixes, design review isn't needed for backend changes. gstack tracks what reviews are run, figures out what's appropriate, and just does the smart thing. The Review Readiness Dashboard tells you where you stand before you ship.

Test everything. /ship bootstraps test frameworks from scratch if your project doesn't have one. Every /ship run produces a coverage audit. Every /qa bug fix generates a regression test. 100% test coverage is the goal — tests make vibe coding safe instead of yolo coding.

/document-release is the engineer you never had. It reads every doc file in your project, cross-references the diff, and updates everything that drifted. README, ARCHITECTURE, CONTRIBUTING, CLAUDE.md, TODOS — all kept current automatically. And now /ship auto-invokes it — docs stay current without an extra command.

Real browser mode. /open-gstack-browser launches GStack Browser, an AI-controlled Chromium with anti-bot stealth, custom branding, and the sidebar extension baked in. Sites like Google and NYTimes work without captchas. The menu bar says "GStack Browser" instead of "Chrome for Testing." Your regular Chrome stays untouched. All existing browse commands work unchanged. $B disconnect returns to headless. The browser stays alive as long as the window is open... no idle timeout killing it while you're working.

Sidebar agent — your AI browser assistant. Type natural language in the Chrome side panel and a child Claude instance executes it. "Navigate to the settings page and screenshot it." "Fill out this form with test data." "Go through every item in this list and extract the prices." The sidebar auto-routes to the right model: Sonnet for fast actions (click, navigate, screenshot) and Opus for reading and analysis. Each task gets up to 5 minutes. The sidebar agent runs in an isolated session, so it won't interfere with your main Claude Code window. One-click cookie import right from the sidebar footer.

Personal automation. The sidebar agent isn't just for dev workflows. Example: "Browse my kid's school parent portal and add all the other parents' names, phone numbers, and photos to my Google Contacts." Two ways to get authenticated: (1) log in once in the headed browser, your session persists, or (2) click the "cookies" button in the sidebar footer to import cookies from your real Chrome. Once authenticated, Claude navigates the directory, extracts the data, and creates the contacts.

Prompt injection defense. Hostile web pages try to hijack your sidebar agent. gstack ships a layered defense: a 22MB ML classifier bundled with the browser scans every page and tool output locally, a Claude Haiku transcript check votes on the full conversation shape, a random canary token in the system prompt catches session exfil attempts across text, tool args, URLs, and file writes, and a verdict combiner requires two classifiers to agree before blocking (prevents single-model false positives on Stack Overflow-style instruction pages). A shield icon in the sidebar header shows status (green/amber/red). Opt in to a 721MB DeBERTa-v3 ensemble via GSTACK_SECURITY_ENSEMBLE=deberta for 2-of-3 agreement. Emergency kill switch: GSTACK_SECURITY_OFF=1. See ARCHITECTURE.md for the full stack.
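The "two classifiers must agree" rule and the canary check can be sketched in a few lines. This is a minimal illustration of the voting idea only; the Vote shape, the verdict labels, and both function names are assumptions, and the real combiner in gstack may weigh signals differently.

```typescript
// Hypothetical verdict combiner. The agreement threshold of 2 comes from
// the README; the types and names are illustrative.
type Verdict = "benign" | "injection";

interface Vote {
  classifier: string; // e.g. "local-ml", "haiku-transcript", "deberta"
  verdict: Verdict;
}

// Block only when at least `requiredAgreement` classifiers flag the
// content, so one model's false positive cannot block on its own.
function combineVerdicts(votes: Vote[], requiredAgreement = 2): "allow" | "block" {
  const flagged = votes.filter((v) => v.verdict === "injection").length;
  return flagged >= requiredAgreement ? "block" : "allow";
}

// Canary check: if the secret token planted in the system prompt shows
// up in any outbound text, tool argument, URL, or file write, something
// is exfiltrating the session.
function leaksCanary(canary: string, outputs: string[]): boolean {
  return canary.length > 0 && outputs.some((output) => output.includes(canary));
}
```

With the optional third classifier enabled, the same function gives 2-of-3 agreement without any change to the threshold.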

Browser handoff when the AI gets stuck. Hit a CAPTCHA, auth wall, or MFA prompt? $B handoff opens a visible Chrome at the exact same page with all your cookies and tabs intact. Solve the problem, tell Claude you're done, $B resume picks up right where it left off. The agent even suggests it automatically after 3 consecutive failures.

/pair-agent is cross-agent coordination. You're in Claude Code. You also have OpenClaw running. Or Hermes. Or Codex. You want them both looking at the same website. Type /pair-agent, pick your agent, and a GStack Browser window opens so you can watch. The skill prints a block of instructions. Paste that block into the other agent's chat. It exchanges a one-time setup key for a session token, creates its own tab, and starts browsing. You see both agents working in the same browser, each in their own tab, neither able to interfere with the other. If ngrok is installed, the tunnel starts automatically so the other agent can be on a completely different machine. Same-machine agents get a zero-friction shortcut that writes credentials directly. This is the first time AI agents from different vendors can coordinate through a shared browser with real security: scoped tokens, tab isolation, rate limiting, domain restrictions, and activity attribution.
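The pairing handshake described above (a one-time setup key exchanged for a session token, with each agent confined to its own tab) can be modeled in memory as follows. PairingBroker and its methods are hypothetical names; the token generator is injected so the sketch stays self-contained, and a real implementation would use a cryptographically secure random source.

```typescript
// Hypothetical in-memory model of the pairing flow. Not gstack's actual
// server code; it only illustrates single-use keys and tab scoping.
interface AgentSession {
  agent: string;
  tabId: number;
}

class PairingBroker {
  private readonly pendingKeys = new Set<string>();
  private readonly sessions = new Map<string, AgentSession>();
  private readonly makeToken: () => string;
  private nextTabId = 1;

  constructor(makeToken: () => string) {
    this.makeToken = makeToken;
  }

  // Step 1: the host prints a setup key for the user to paste elsewhere.
  issueSetupKey(): string {
    const key = this.makeToken();
    this.pendingKeys.add(key);
    return key;
  }

  // Step 2: the other agent trades the key for a session token. The key
  // is deleted on first use, so a replayed key is rejected.
  exchange(setupKey: string, agent: string): string {
    if (!this.pendingKeys.delete(setupKey)) {
      throw new Error("setup key is invalid or already used");
    }
    const token = this.makeToken();
    this.sessions.set(token, { agent, tabId: this.nextTabId++ });
    return token;
  }

  // Tab isolation: a token only authorizes the tab created for it.
  canUseTab(token: string, tabId: number): boolean {
    return this.sessions.get(token)?.tabId === tabId;
  }
}
```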

Multi-AI second opinion. /codex gets an independent review from OpenAI's Codex CLI — a completely different AI looking at the same diff. Three modes: code review with a pass/fail gate, adversarial challenge that actively tries to break your code, and open consultation with session continuity. When both /review (Claude) and /codex (OpenAI) have reviewed the same branch, you get a cross-model analysis showing which findings overlap and which are unique to each.

Safety guardrails on demand. Say "be careful" and /careful warns before any destructive command — rm -rf, DROP TABLE, force-push, git reset --hard. /freeze locks edits to one directory while debugging so Claude can't accidentally "fix" unrelated code. /guard activates both. /investigate auto-freezes to the module being investigated.
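A guard like /careful comes down to matching a command against known destructive patterns before it runs. The sketch below covers the four examples named above; the regular expressions and the findDestructive helper are assumptions for illustration and are neither exhaustive nor gstack's actual rules.

```typescript
// Hypothetical pattern table for destructive commands. Real guardrails
// would need a proper shell parser; regexes are only a first filter.
const DESTRUCTIVE_PATTERNS: ReadonlyArray<{ label: string; pattern: RegExp }> = [
  // rm with both -r and -f in the same flag group, in either order
  { label: "rm -rf", pattern: /\brm\s+-(?=[a-z]*r)(?=[a-z]*f)[a-z]+/i },
  { label: "DROP TABLE", pattern: /\bdrop\s+table\b/i },
  // git push with --force (including --force-with-lease) or -f
  { label: "force-push", pattern: /\bgit\s+push\b.*(?:--force\b|\s-f\b)/ },
  { label: "git reset --hard", pattern: /\bgit\s+reset\s+--hard\b/ },
];

// Return the label of the first matching pattern, or null if the
// command looks safe under these rules.
function findDestructive(command: string): string | null {
  for (const { label, pattern } of DESTRUCTIVE_PATTERNS) {
    if (pattern.test(command)) return label;
  }
  return null;
}
```

A caller would warn and ask for confirmation when the result is non-null, which matches the "override any warning" behavior described for /careful.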

Proactive skill suggestions. gstack notices what stage you're in — brainstorming, reviewing, debugging, testing — and suggests the right skill. Don't like it? Say "stop suggesting" and it remembers across sessions.

10-15 parallel sprints

gstack is powerful with one sprint. It is transformative with ten running at once.

Conductor runs multiple Claude Code sessions in parallel — each in its own isolated workspace. One session running /office-hours on a new idea, another doing /review on a PR, a third implementing a feature, a fourth running /qa on staging, and six more on other branches. All at the same time. I regularly run 10-15 parallel sprints — that's the practical max right now.

The sprint structure is what makes parallelism work. Without a process, ten agents is ten sources of chaos. With a process — think, plan, build, review, test, ship — each agent knows exactly what to do and when to stop. You manage them the way a CEO manages a team: check in on the decisions that matter, let the rest run.

Voice input (AquaVoice, Whisper, etc.)

gstack skills have voice-friendly trigger phrases. Say what you want naturally — "run a security check", "test the website", "do an engineering review" — and the right skill activates. You don't need to remember slash command names or acronyms.

Uninstall

Option 1: Run the uninstall script

If gstack is installed on your machine:

~/.claude/skills/gstack/bin/gstack-uninstall

This handles skills, symlinks, global state (~/.gstack/), project-local state, browse daemons, and temp files. Use --keep-state to preserve config and analytics. Use --force to skip confirmation.

Option 2: Manual removal (no local repo)

If you don't have the repo cloned (e.g. you installed via a Claude Code paste and later deleted the clone):

# 1. Stop browse daemons

pkill -f "gstack.*browse" 2>/dev/null || true

# 2. Remove per-skill symlinks pointing into gstack/

find ~/.claude/skills -maxdepth 1 -type l 2>/dev/null | while read -r link; do

case "$(readlink "$link" 2>/dev/null)" in gstack/*|*/gstack/*) rm -f "$link" ;; esac

done

# 3. Remove gstack

rm -rf ~/.claude/skills/gstack

# 4. Remove global state

rm -rf ~/.gstack

# 5. Remove integrations (skip any you never installed)

rm -rf ~/.codex/skills/gstack* 2>/dev/null

rm -rf ~/.factory/skills/gstack* 2>/dev/null

rm -rf ~/.kiro/skills/gstack* 2>/dev/null

rm -rf ~/.openclaw/skills/gstack* 2>/dev/null

# 6. Remove temp files

rm -f /tmp/gstack-* 2>/dev/null

# 7. Per-project cleanup (run from each project root)

rm -rf .gstack .gstack-worktrees .claude/skills/gstack 2>/dev/null

rm -rf .agents/skills/gstack* .factory/skills/gstack* 2>/dev/null

Clean up CLAUDE.md

The uninstall script does not edit CLAUDE.md. In each project where gstack was added, remove the ## gstack and ## Skill routing sections.

Playwright

~/Library/Caches/ms-playwright/ (macOS) is left in place because other tools may share it. Remove it if nothing else needs it.

Free, MIT licensed, open source. No premium tier, no waitlist.

I open sourced how I build software. You can fork it and make it your own.

We're hiring. Want to ship real products at AI-coding speed and help harden gstack? Come work at YC: ycombinator.com/software. Extremely competitive salary and equity. San Francisco, Dogpatch District.

GBrain — persistent knowledge for your coding agent

GBrain is a persistent knowledge base for AI agents — think of it as the memory your agent actually keeps between sessions. GStack gives you a one-command path from zero to "it's running, my agent can call it."

/setup-gbrain

Three paths, pick one:

- Supabase, existing URL: your cloud agent already provisioned a brain; paste the Session Pooler URL, now this laptop uses the same data.

- Supabase, auto-provision: paste a Supabase Personal Access Token; the skill creates a new project, polls to healthy, fetches the pooler URL, hands it to gbrain init. ~90 seconds end-to-end.

- PGLite local: zero accounts, zero network, ~30 seconds. Isolated brain on this Mac only. Great for try-first; migrate to Supabase later with /setup-gbrain --switch.

After init, the skill offers to register gbrain as an MCP server for Claude Code (claude mcp add gbrain -- gbrain serve) so gbrain search, gbrain put_page, etc. show up as first-class typed tools — not bash shell-outs.

Keeping the brain current. Run /sync-gbrain from any repo to re-index its code into gbrain (incremental by default, --full for a full reindex, --dry-run to preview). The skill registers the cwd as a federated source via gbrain sources add, runs gbrain sync --strategy code, and writes a ## GBrain Search Guidance block to your project's CLAUDE.md so the agent prefers gbrain search/code-def/code-refs over Grep. The block is removed automatically if the capability check fails — no stale guidance pointing at tools that aren't installed.

Per-remote trust policy. Each repo on your machine gets one of three tiers:

- read-write: agent can search the brain AND write new pages back from this repo

- read-only: agent can search but never writes (best for multi-client consultants: search the shared brain, don't contaminate it with Client A's work while in Client B's repo)

- deny: no gbrain interaction at all

The skill asks once per repo. The decision is sticky across worktrees and branches of the same remote.
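Because the decision is sticky per remote, one plausible implementation keys the policy on a normalized remote URL so that SSH and HTTPS clones of the same repository share one entry. The sketch below assumes that design; normalizeRemote, setTier, and tierFor are hypothetical names, and the normalization does not handle every URL form (an explicit port, for example).

```typescript
// Hypothetical per-remote trust store. The three tier names come from
// the README; the storage and normalization are assumptions.
type TrustTier = "read-write" | "read-only" | "deny";

// Reduce common git remote spellings to "host/owner/repo".
function normalizeRemote(url: string): string {
  return url
    .trim()
    .replace(/^[a-z][a-z0-9+.-]*:\/\//i, "") // strip scheme (https://, ssh://)
    .replace(/^[^@/]+@/, "")                 // strip user@ (git@)
    .replace(/^([^/:]+):/, "$1/")            // scp-style host:path to host/path
    .replace(/\/+$/, "")                     // strip trailing slashes
    .replace(/\.git$/, "");                  // strip .git suffix
}

function setTier(policy: Map<string, TrustTier>, remote: string, tier: TrustTier): void {
  policy.set(normalizeRemote(remote), tier);
}

// undefined means the user has not been asked yet for this remote.
function tierFor(policy: Map<string, TrustTier>, remote: string): TrustTier | undefined {
  return policy.get(normalizeRemote(remote));
}

const canSearch = (tier: TrustTier): boolean => tier !== "deny";
const canWrite = (tier: TrustTier): boolean => tier === "read-write";
```

Keying on the remote rather than the checkout path is what makes the answer carry across worktrees and branches.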

GStack memory sync (different feature, same private-repo infra). Optionally pushes your gstack state (learnings, CEO plans, design docs, retros, developer profile) to a private git repo so your memory follows you across machines, with a one-time privacy prompt (everything allowlisted / artifacts only / off) and a defense-in-depth secret scanner that blocks AWS keys, tokens, PEM blocks, and JWTs before they leave your machine.
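A pre-push secret scan of the kind described can be approximated with a handful of patterns. This is a sketch only: the pattern list and the scanForSecrets name are assumptions, real scanners use many more rules, and the GitHub token pattern stands in for the broader "tokens" category.

```typescript
// Hypothetical secret patterns covering the four categories the README
// names. The formats (AKIA prefix, PEM header, eyJ-prefixed JWT
// segments, ghp_ prefix) are widely documented conventions.
const SECRET_PATTERNS: ReadonlyArray<{ kind: string; pattern: RegExp }> = [
  { kind: "aws-access-key-id", pattern: /\bAKIA[0-9A-Z]{16}\b/ },
  { kind: "pem-private-key", pattern: /-----BEGIN [A-Z ]*PRIVATE KEY-----/ },
  { kind: "jwt", pattern: /\beyJ[A-Za-z0-9_-]+\.eyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+/ },
  { kind: "github-token", pattern: /\bghp_[A-Za-z0-9]{36}\b/ },
];

// Return the kinds of secrets found in the text; an empty array means
// nothing matched and the content may leave the machine.
function scanForSecrets(text: string): string[] {
  return SECRET_PATTERNS.filter(({ pattern }) => pattern.test(text)).map(({ kind }) => kind);
}
```

Running the scan on every file before it is staged for sync, and refusing the push on any hit, gives the "blocks before they leave your machine" behavior.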

gstack-brain-init

Full monty — every scenario, every flag, every bin helper, every troubleshooting step: USING_GBRAIN_WITH_GSTACK.md

Other references: docs/gbrain-sync.md (sync-specific guide) • docs/gbrain-sync-errors.md (error index)

Docs

| Doc | What it covers |

|---|---|

| Skill Deep Dives | Philosophy, examples, and workflow for every skill (includes Greptile integration) |

| Builder Ethos | Builder philosophy: Boil the Lake, Search Before Building, three layers of knowledge |

| Using GBrain with GStack | Every path, flag, bin helper, and troubleshooting step for /setup-gbrain |

| GBrain Sync | Cross-machine memory setup, privacy modes, troubleshooting |

| Architecture | Design decisions and system internals |

| Browser Reference | Full command reference for /browse |

| Contributing | Dev setup, testing, contributor mode, and dev mode |

| Changelog | What's new in every version |

Privacy & Telemetry

gstack includes opt-in usage telemetry to help improve the project. Here's exactly what happens:

- Default is off. Nothing is sent anywhere unless you explicitly say yes.

- On first run, gstack asks if you want to share anonymous usage data. You can say no.

- What's sent (if you opt in): skill name, duration, success/fail, gstack version, OS. That's it.

- What's never sent: code, file paths, repo names, branch names, prompts, or any user-generated content.

- Change anytime: gstack-config set telemetry off disables everything instantly.

Data is stored in Supabase (open source Firebase alternative). The schema is in supabase/migrations/ — you can verify exactly what's collected. The Supabase publishable key in the repo is a public key (like a Firebase API key) — row-level security policies deny all direct access. Telemetry flows through validated edge functions that enforce schema checks, event type allowlists, and field length limits.
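The three server-side checks named above (schema, event type allowlist, field length limits) could be combined in a validator along these lines. The field names, the "skill_run" event type, and the 64-character limit are hypothetical; the actual schema lives in supabase/migrations/ and is not reproduced here.

```typescript
// Hypothetical telemetry validator. It rejects unknown fields outright,
// which is one way to guarantee paths or prompts cannot ride along.
const ALLOWED_EVENT_TYPES = new Set<string>(["skill_run"]); // assumed name
const MAX_STRING_LENGTH = 64;                               // assumed limit
const STRING_FIELDS = ["eventType", "skill", "gstackVersion", "os"];
const ALLOWED_FIELDS = new Set<string>([
  "eventType", "skill", "gstackVersion", "os", "durationMs", "success",
]);

// Returns a list of problems; an empty list means the event is accepted.
function validateTelemetryEvent(input: unknown): string[] {
  if (typeof input !== "object" || input === null || Array.isArray(input)) {
    return ["payload must be an object"];
  }
  const record = input as Record<string, unknown>;
  const errors: string[] = [];

  for (const key of Object.keys(record)) {
    if (!ALLOWED_FIELDS.has(key)) errors.push(`unexpected field: ${key}`);
  }
  for (const field of STRING_FIELDS) {
    const value = record[field];
    if (typeof value !== "string" || value.length === 0) {
      errors.push(`${field} must be a non-empty string`);
    } else if (value.length > MAX_STRING_LENGTH) {
      errors.push(`${field} exceeds ${MAX_STRING_LENGTH} characters`);
    }
  }
  const duration = record["durationMs"];
  if (typeof duration !== "number" || !Number.isFinite(duration) || duration < 0) {
    errors.push("durationMs must be a non-negative number");
  }
  if (typeof record["success"] !== "boolean") {
    errors.push("success must be a boolean");
  }
  const eventType = record["eventType"];
  if (typeof eventType === "string" && !ALLOWED_EVENT_TYPES.has(eventType)) {
    errors.push(`event type not allowed: ${eventType}`);
  }
  return errors;
}
```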

Local analytics are always available. Run gstack-analytics to see your personal usage dashboard from the local JSONL file — no remote data needed.

Troubleshooting

Skill not showing up? cd ~/.claude/skills/gstack && ./setup

/browse fails? cd ~/.claude/skills/gstack && bun install && bun run build

Stale install? Run /gstack-upgrade — or set auto_upgrade: true in ~/.gstack/config.yaml

Want shorter commands? cd ~/.claude/skills/gstack && ./setup --no-prefix — switches from /gstack-qa to /qa. Your choice is remembered for future upgrades.

Want namespaced commands? cd ~/.claude/skills/gstack && ./setup --prefix — switches from /qa to /gstack-qa. Useful if you run other skill packs alongside gstack.

Codex says "Skipped loading skill(s) due to invalid SKILL.md"? Your Codex skill descriptions are stale. Fix: cd ~/.codex/skills/gstack && git pull && ./setup --host codex — or for repo-local installs: cd "$(readlink -f .agents/skills/gstack)" && git pull && ./setup --host codex

Windows users: gstack works on Windows 11 via Git Bash or WSL. Node.js is required in addition to Bun — Bun has a known bug with Playwright's pipe transport on Windows (bun#4253). The browse server automatically falls back to Node.js. Make sure both bun and node are on your PATH.

Claude says it can't see the skills? Make sure your project's CLAUDE.md has a gstack section. Add this:

## gstack

Use /browse from gstack for all web browsing. Never use mcp__claude-in-chrome__* tools.

Available skills: /office-hours, /plan-ceo-review, /plan-eng-review, /plan-design-review,

/design-consultation, /design-shotgun, /design-html, /review, /ship, /land-and-deploy,

/canary, /benchmark, /browse, /open-gstack-browser, /qa, /qa-only, /design-review,

/setup-browser-cookies, /setup-deploy, /setup-gbrain, /sync-gbrain, /retro, /investigate, /document-release,

/codex, /cso, /autoplan, /pair-agent, /careful, /freeze, /guard, /unfreeze, /gstack-upgrade, /learn.

License

MIT. Free forever. Go build something.